publications

publications by categories in reversed chronological order. generated by jekyll-scholar.

2025

- [arXiv] A Bayesian Nonparametric Perspective on Mahalanobis Distance for Out of Distribution Detection. Randolph W. Linderman, Yiran Chen, and Scott W. Linderman. 2025.

Bayesian nonparametric methods are naturally suited to the problem of out-of-distribution (OOD) detection. However, these techniques have largely been eschewed in favor of simpler methods based on distances between pre-trained or learned embeddings of data points. Here we show a formal relationship between Bayesian nonparametric models and the relative Mahalanobis distance score (RMDS), a commonly used method for OOD detection. Building on this connection, we propose Bayesian nonparametric mixture models with hierarchical priors that generalize the RMDS. We evaluate these models on the OpenOOD detection benchmark and show that Bayesian nonparametric methods can improve upon existing OOD methods, especially in regimes where training classes differ in their covariance structure and where there are relatively few data points per class.

2024

- [BigData] OSR-ViT: A Simple and Modular Framework for Open-Set Object Detection and Discovery. Matthew Inkawhich, Nathan Inkawhich, Hao Yang, Jingyang Zhang, Randolph Linderman, and Yiran Chen. In 2024 IEEE International Conference on Big Data (BigData), Dec 2024.

An object detector’s ability to detect and flag novel objects during open-world deployments is critical for many real-world applications. Unfortunately, much of the work in open object detection today is disjointed and fails to adequately address applications that prioritize unknown object recall in addition to known-class accuracy. To close this gap, we present a new task called Open-Set Object Detection and Discovery (OSODD) and as a solution propose the Open-Set Regions with ViT features (OSR-ViT) detection framework. OSR-ViT combines a class-agnostic proposal network with a powerful ViT-based classifier. Its modular design simplifies optimization and allows users to easily swap proposal solutions and feature extractors to best suit their application. Using our multifaceted evaluation protocol, we show that OSR-ViT obtains performance levels that far exceed state-of-the-art supervised methods. Our method also excels in low-data settings, outperforming supervised baselines using a fraction of the training data.

2023

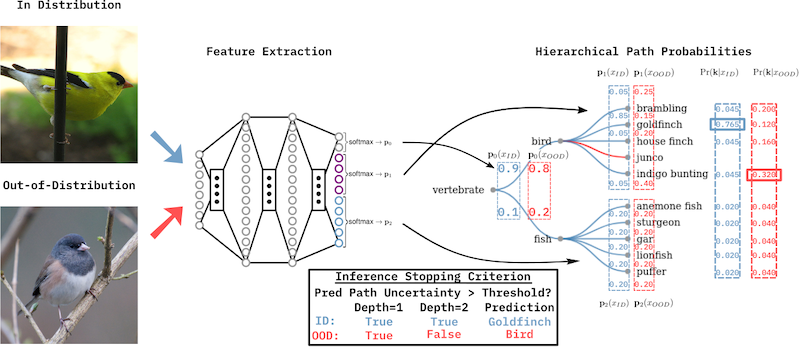

- Fine-grain Inference on Out-of-Distribution Data with Hierarchical Classification. Randolph Linderman, Jingyang Zhang, Nathan Inkawhich, Hai Li, and Yiran Chen. In Proceedings of The 2nd Conference on Lifelong Learning Agents, 22–25 Aug 2023.

Machine learning methods must be trusted to make appropriate decisions in real-world environments, even when faced with out-of-distribution (OOD) samples. Many current approaches simply aim to detect OOD examples and alert the user when an unrecognized input is given. However, when the OOD sample significantly overlaps with the training data, a binary anomaly detection is not interpretable or explainable, and provides little information to the user. We propose a new model for OOD detection that makes predictions at varying levels of granularity: as the inputs become more ambiguous, the model predictions become coarser and more conservative. Consider an animal classifier that encounters an unknown bird species and a car. Both cases are OOD, but the user gains more information if the classifier recognizes that its uncertainty over the particular species is too large and predicts “bird” instead of detecting it as OOD. Furthermore, we diagnose the classifier’s performance at each level of the hierarchy, improving the explainability and interpretability of the model’s predictions. We demonstrate the effectiveness of hierarchical classifiers for both fine- and coarse-grained OOD tasks.

- [WACV] Mixture Outlier Exposure: Towards Out-of-Distribution Detection in Fine-Grained Environments. Jingyang Zhang, Nathan Inkawhich, Randolph Linderman, Yiran Chen, and Hai Li. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Jan 2023.

Many real-world scenarios in which DNN-based recognition systems are deployed have inherently fine-grained attributes (e.g., bird-species recognition, medical image classification). In addition to achieving reliable accuracy, a critical subtask for these models is to detect out-of-distribution (OOD) inputs. Given the nature of the deployment environment, one may expect such OOD inputs to also be fine-grained w.r.t. the known classes (e.g., a novel bird species), which are thus extremely difficult to identify. Unfortunately, OOD detection in fine-grained scenarios remains largely underexplored. In this work, we aim to fill this gap by first carefully constructing four large-scale fine-grained test environments, in which existing methods are shown to have difficulties. In particular, we find that even explicitly incorporating a diverse set of auxiliary outlier data during training does not provide sufficient coverage over the broad region where fine-grained OOD samples lie. We then propose Mixture Outlier Exposure (MixOE), which mixes ID data and training outliers to expand the coverage of different OOD granularities, and trains the model such that the prediction confidence linearly decays as the input transitions from ID to OOD. Extensive experiments and analyses demonstrate the effectiveness of MixOE for building OOD detectors in fine-grained environments. The code is available at https://github.com/zjysteven/MixOE.

- [arXiv] SIO: Synthetic In-Distribution Data Benefits Out-of-Distribution Detection. Jingyang Zhang, Nathan Inkawhich, Randolph Linderman, Ryan Luley, Yiran Chen, and Hai Li. Jan 2023.

Building reliable out-of-distribution (OOD) detectors is challenging, often requiring the use of OOD data during training. In this work, we develop a data-driven approach that is distinct from and complementary to existing works: instead of using external OOD data, we fully exploit the internal in-distribution (ID) training set by utilizing generative models to produce additional synthetic ID images. The classifier is then trained using a novel objective that computes a weighted loss on real and synthetic ID samples together. Our training framework, which is termed SIO, serves as a "plug-and-play" technique that is designed to be compatible with existing and future OOD detection algorithms, including the ones that leverage available OOD training data. Our experiments on CIFAR-10, CIFAR-100, and ImageNet variants demonstrate that SIO consistently improves the performance of nearly all state-of-the-art (SOTA) OOD detection algorithms. For instance, on the challenging CIFAR-10 vs. CIFAR-100 detection problem, SIO improves the average OOD detection AUROC of 18 existing methods from 86.25% to 89.04% and achieves a new SOTA of 92.94% according to the OpenOOD benchmark. Code is available.